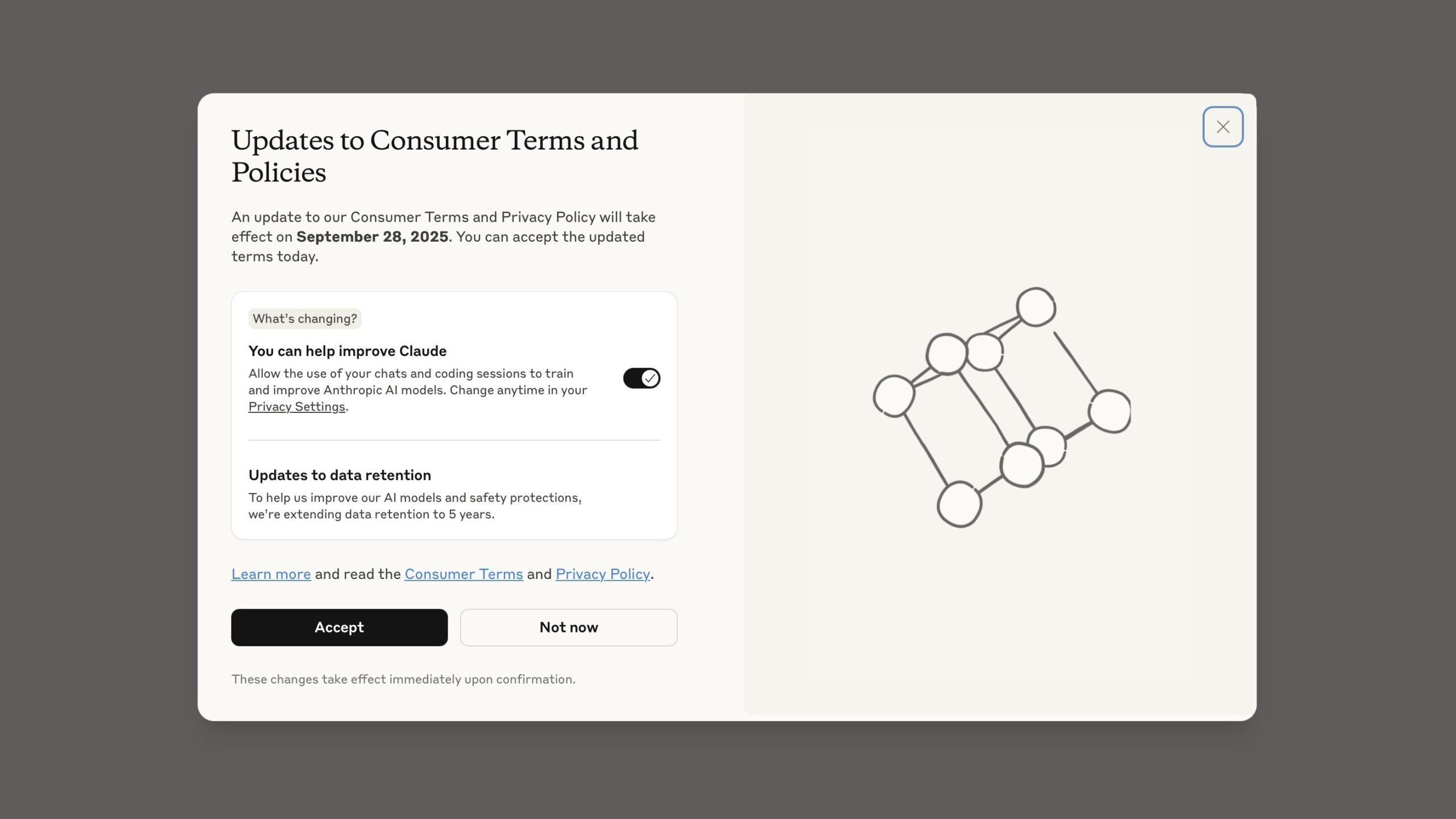

Protect Your Conversations from Being Used to Train AI Models

Quick summary:

- Anthropic, an AI company, has been using user conversations to train its AI model, Claude.

- Claude is designed as a conversational assistant and trained on vast datasets for better performance in natural language understanding and generation.

- Training processes incorporate anonymized interactions from users to improve the system’s capabilities over time.

- The initiative reflects a global trend of companies using real-world interaction data to improve their AI systems.

Indian Opinion Analysis:

The development of conversational assistants such as Claude, which are trained in part on user input, highlights how artificial intelligence is evolving globally, along with its ethical dimensions, such as user data anonymity and trustworthiness. For India, which is rapidly embracing digitization and fostering emerging technologies through initiatives such as Digital India, this underscores the growing need for robust regulation of data privacy and AI ethics. As Indian companies innovate in similar spaces, balancing technological growth with ethical standards will be crucial to maintaining public trust without hindering progress.